In information geometry, statistical data take values in sets equipped with a Riemannian structure, of finite or infinite dimension. Estimators of quantities related to these data are means, medians, or more generally p-means of the data. Stochastic algorithms that compute these p-means are central to the practical applications developed in the publications listed below, in particular the processing of stationary radar signals. A key open issue is the treatment of non-stationary signals. This requires working on path spaces in Riemannian manifolds and developing suitable notions of metric, distance, and mean for these paths.
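To make the idea of a stochastic algorithm for p-means concrete, here is a minimal sketch on the unit sphere, chosen only as a simple Riemannian manifold. It assumes a standard Robbins-Monro-type scheme: each new sample pulls the current estimate along the geodesic towards it, with decreasing steps `gamma_k = 1/(k+1)`. This is not necessarily the algorithm of the cited publications; the function names (`sphere_exp`, `sphere_log`, `stochastic_p_mean`), the restriction `1 <= p <= 2`, and the synthetic data are all hypothetical.

```python
import numpy as np


def sphere_exp(x, v):
    """Exponential map of the unit sphere: follow the geodesic from x with initial velocity v."""
    norm_v = np.linalg.norm(v)
    if norm_v < 1e-12:
        return x.copy()
    y = np.cos(norm_v) * x + np.sin(norm_v) * v / norm_v
    return y / np.linalg.norm(y)  # re-normalise to limit round-off drift


def sphere_log(x, y):
    """Logarithm map: tangent vector at x pointing towards y, with length d(x, y)."""
    c = np.clip(np.dot(x, y), -1.0, 1.0)
    u = y - c * x  # component of y orthogonal to x
    norm_u = np.linalg.norm(u)
    if norm_u < 1e-12:
        return np.zeros_like(x)  # y == x (the antipodal case is ignored in this sketch)
    return np.arccos(c) * u / norm_u


def stochastic_p_mean(samples, p=2.0):
    """Stochastic estimate of the p-mean (assumed 1 <= p <= 2) of points on the sphere.

    Each new sample y moves the estimate x along the geodesic towards y:
        x <- exp_x(gamma_k * d(x, y)**(p - 2) * log_x(y)),
    a stochastic gradient step on x -> E[d(x, Y)**p] (constant factor p dropped).
    p = 2 gives the Frechet mean, p = 1 the Riemannian median.
    """
    x = samples[0].copy()
    for k, y in enumerate(samples[1:], start=1):
        gamma = 1.0 / (k + 1)  # decreasing steps: sum diverges, sum of squares is finite
        v = sphere_log(x, y)
        d = np.linalg.norm(v)
        if d < 1e-12:
            continue  # sample coincides with the estimate: no move
        x = sphere_exp(x, gamma * d ** (p - 2.0) * v)
    return x


# Hypothetical synthetic data: points scattered isotropically around the north pole,
# so by symmetry both the mean and the median are the north pole itself.
rng = np.random.default_rng(0)
north = np.array([0.0, 0.0, 1.0])
tangent = np.column_stack([0.3 * rng.standard_normal((5000, 2)), np.zeros(5000)])
samples = np.array([sphere_exp(north, v) for v in tangent])

for p in (1.0, 2.0):
    estimate = stochastic_p_mean(samples, p)
    error_deg = np.degrees(np.linalg.norm(sphere_log(north, estimate)))
    print(f"p = {p}: geodesic distance to the north pole = {error_deg:.2f} degrees")
```

For p = 2 with these steps the recursion reduces to an incremental geodesic mean; for p = 1 each step has fixed length `gamma_k` in the direction of the sample, which is what makes the median robust to outliers.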

New stochastic algorithms of Robbins-Monro type have also been proposed to efficiently estimate the unknown parameters of deformation models. These estimation procedures have been applied to real ECG data to detect cardiac arrhythmias.
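As a toy illustration of a Robbins-Monro recursion for a deformation parameter, the sketch below estimates a single unknown shift `theta` in the model `y = f(t - theta) + noise`. As a simplifying assumption, the template `f` is taken to be known (a sine wave standing in for a heartbeat shape), and the recursion `theta_{n+1} = theta_n - gamma_n * H(theta_n, (t_n, y_n))` uses a noisy gradient `H` whose mean vanishes at the true shift. The names, the step-size constants `c` and `n0`, and the data are hypothetical and do not reproduce the procedures applied to ECG data.

```python
import numpy as np


def template(t):
    """Hypothetical known 1-periodic template f (a stand-in for a typical heartbeat shape)."""
    return np.sin(2.0 * np.pi * t)


def template_derivative(t):
    """Derivative f' of the template."""
    return 2.0 * np.pi * np.cos(2.0 * np.pi * t)


def robbins_monro_shift(times, values, theta0=0.0, c=0.2, n0=20):
    """Robbins-Monro estimate of theta in the model y = f(t - theta) + noise.

    H(theta, (t, y)) = (y - f(t - theta)) * f'(t - theta) is the gradient in theta of
    (y - f(t - theta))**2 / 2; its expectation vanishes at the true shift, which is a
    stable root of the mean field.
    """
    theta = theta0
    for n, (t, y) in enumerate(zip(times, values), start=1):
        gamma = c / (n + n0)  # sum of gamma_n diverges, sum of gamma_n**2 converges
        u = t - theta
        theta -= gamma * (y - template(u)) * template_derivative(u)
        theta %= 1.0  # f is 1-periodic, so the shift is only defined modulo 1
    return theta


# Hypothetical synthetic data: noisy observations of the shifted template at random times.
rng = np.random.default_rng(1)
true_shift = 0.2
times = rng.uniform(0.0, 1.0, 5000)
values = template(times - true_shift) + 0.3 * rng.standard_normal(5000)

estimate = robbins_monro_shift(times, values)
print(f"estimated shift: {estimate:.3f} (true value {true_shift})")
```

Each observation is used once and then discarded, which is what makes such recursions attractive for long signal recordings: memory and cost per sample stay constant.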

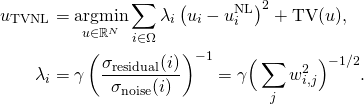

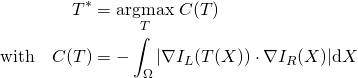

Proximal methods have been extremely successful in image processing, where they provide efficient algorithms for computing solutions of the underlying optimization problems. A major theme of the team is the study of these algorithms: their convergence, their speed of convergence, and their robustness to errors.
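A minimal sketch of a proximal (forward-backward) algorithm, assuming the ℓ1-regularized least-squares problem `min_x 0.5*||A x - b||^2 + lam*||x||_1` as a stand-in for the problems considered: a gradient step on the smooth term is followed by the proximal operator of `lam*||.||_1`, which is componentwise soft thresholding. With step `1/L`, where `L = ||A||_2^2`, the objective is non-increasing and `F(x_k) - F* = O(1/k)`. The problem instance and the function names are hypothetical.

```python
import numpy as np


def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1 (componentwise soft thresholding)."""
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)


def proximal_gradient(A, b, lam, n_iter=500):
    """Forward-backward (ISTA) iterations for min_x 0.5*||A x - b||^2 + lam*||x||_1.

    The step 1/L uses L = ||A||_2^2, the Lipschitz constant of the gradient of the
    smooth term; with this step the objective is non-increasing along the iterates.
    """
    L = np.linalg.norm(A, 2) ** 2  # squared largest singular value of A
    x = np.zeros(A.shape[1])
    history = []
    for _ in range(n_iter):
        grad = A.T @ (A @ x - b)  # forward step: gradient of the smooth term
        x = soft_threshold(x - grad / L, lam / L)  # backward step: proximal operator
        history.append(0.5 * np.sum((A @ x - b) ** 2) + lam * np.sum(np.abs(x)))
    return x, history


# Hypothetical test problem: noisy measurements of a sparse vector.
rng = np.random.default_rng(2)
A = rng.standard_normal((100, 20))
x_true = np.zeros(20)
x_true[[2, 7, 15]] = [1.5, -2.0, 0.8]
b = A @ x_true + 0.05 * rng.standard_normal(100)
lam = 1.0

x_hat, history = proximal_gradient(A, b, lam)
print("objective: first", round(history[0], 4), "last", round(history[-1], 4))
print("recovered support:", np.flatnonzero(np.abs(x_hat) > 1e-8))
```

Accelerated variants such as FISTA improve the worst-case rate on the objective from O(1/k) to O(1/k²), at the cost of losing the monotone decrease of the objective.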